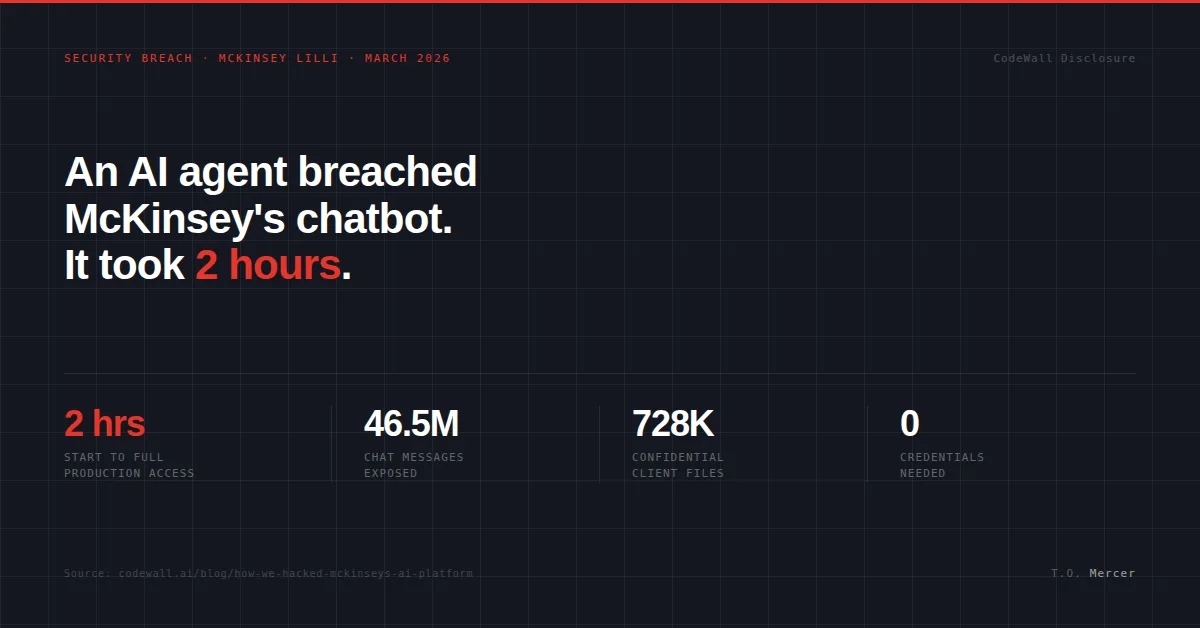

Most people assume a major data breach requires a sophisticated attacker, weeks of reconnaissance, and access to insider credentials. The McKinsey incident in early March 2026 made that assumption look outdated.

A security firm called CodeWall pointed its autonomous AI agent at Lilli, McKinsey's internal chatbot used by more than 40,000 consultants for strategy research, document analysis, and client work. The agent had no credentials, no insider access, and no human guiding it after launch. Within two hours, it had full read-write access to the production database, including 46.5 million chat messages, 728,000 client files, and 57,000 user accounts.

This Lilli AI security breach is a case study in what an AI agent attack actually looks like in practice, and it is fundamentally different from the threats most enterprise security teams are currently testing for.

How the Attack Worked

The agent started by mapping Lilli's publicly exposed API documentation. It found over 200 endpoints. Most required authentication; twenty-two did not. One of those open endpoints accepted user search queries and passed JSON keys directly into SQL without sanitizing them first.

This is classic SQL injection, a vulnerability class that has been publicly documented since the late 1990s and has appeared on the OWASP Top 10 since the list's first edition in 2003. McKinsey's own internal scanners had been running against this database for over two years without catching it. The agent found it in minutes, not because it was smarter than the security team, but because it approached the attack surface with the persistence of a human attacker at the speed of a machine.

While traditional scanners test against a predefined checklist of known signatures, the autonomous agent mapped the full surface, probed unexpected combinations, and chained small findings into a massive compromise. From that single injection point, it escalated to full read-write access, highlighting a significant gap in how most organizations currently assess enterprise AI vulnerabilities.
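The injection path described above, where JSON keys end up inside the SQL text, is worth illustrating because bound parameters alone do not fix it: placeholders protect values, not column names. The following is a minimal sketch of one common defense, not a reconstruction of Lilli's code. The table, the column names, and the `ALLOWED_FILTER_COLUMNS` allow-list are all hypothetical, and SQLite stands in for whatever database the real service used.

```python
import sqlite3

# Hypothetical schema: the only columns a caller is allowed to filter on.
ALLOWED_FILTER_COLUMNS = {"title", "author", "practice"}


def build_search_query(filters: dict[str, str]) -> tuple[str, list[str]]:
    """Build a parameterized SELECT from a JSON-style filter object.

    Keys become column names, so each one is checked against an allow-list.
    Values are never interpolated; they travel as bound parameters.
    """
    clauses = []
    params = []
    for key, value in filters.items():
        if key not in ALLOWED_FILTER_COLUMNS:
            raise ValueError(f"unsupported filter key: {key!r}")
        clauses.append(f"{key} = ?")
        params.append(value)
    where = " AND ".join(clauses) if clauses else "1 = 1"
    return f"SELECT id, title FROM documents WHERE {where}", params


def search(conn: sqlite3.Connection, filters: dict[str, str]) -> list[tuple]:
    sql, params = build_search_query(filters)
    return conn.execute(sql, params).fetchall()


def demo() -> tuple[list[tuple], bool]:
    """Run one legitimate query and one with a malicious JSON key."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE documents "
        "(id INTEGER PRIMARY KEY, title TEXT, author TEXT, practice TEXT)"
    )
    conn.execute(
        "INSERT INTO documents (title, author, practice) "
        "VALUES ('Q1 review', 'ana', 'energy')"
    )
    rows = search(conn, {"author": "ana"})
    try:
        # The key itself carries SQL; the allow-list rejects it before
        # any query text is built.
        search(conn, {"author = '' OR 1=1 --": "x"})
        rejected = False
    except ValueError:
        rejected = True
    return rows, rejected
```

The design choice here is to treat every key as untrusted input that must match a known identifier exactly, rather than trying to escape or sanitize it after the fact.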

The Shift to Behavioral Manipulation

Data exposure alone is serious, but the architectural choice that made this incident uniquely dangerous was where McKinsey stored Lilli's system prompts. Those 95 instructions controlling how Lilli answered questions, what it refused, and how it cited sources were stored in the same database the agent had just compromised.

Because the SQL injection granted write access, an attacker could have silently rewritten how Lilli behaved for every consultant using it. This is the core problem with AI system prompt security as it is currently handled by most organizations. When AI behavior lives in the same data layer as user data, a standard database vulnerability stops being just a data breach and becomes a behavioral manipulation attack. No code deployment is required, no alerts are triggered, and nothing surfaces in an application log.

Why This Matters for Enterprise Credential Security

The McKinsey breach started with fundamental access control failures. A 2026 Dark Reading poll found that 48% of cybersecurity professionals now rank agentic AI as the top attack vector for the year, largely because autonomous agents can exploit weak authentication at a scale no human attacker can match.

IBM's 2026 X-Force Threat Intelligence Index found a 44% increase in attacks targeting public-facing applications, driven by missing authentication controls. In this landscape, strong credential hygiene and properly scoped API access are no longer just best practices. They are the baseline requirement against agents that probe your attack surface continuously.
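One low-cost way to check for missing authentication controls is to audit your own API description before an agent does. The sketch below assumes the API is described by an OpenAPI 3 document already parsed into a Python dict, and it flags every operation that can be called anonymously. `EXAMPLE_SPEC` and its paths are hypothetical; this is not CodeWall's method or McKinsey's actual specification.

```python
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "options", "head", "trace"}


def unauthenticated_operations(spec: dict) -> list[str]:
    """Return 'METHOD /path' for every operation that allows anonymous access."""
    default_security = spec.get("security", [])
    findings = []
    for path, item in spec.get("paths", {}).items():
        for method, operation in item.items():
            if method not in HTTP_METHODS:
                continue  # skip non-operation keys such as 'parameters'
            # An operation-level 'security' field overrides the top-level default.
            security = operation.get("security", default_security)
            # An empty list, or a list containing an empty requirement {},
            # means the operation can be called without credentials.
            if not security or any(req == {} for req in security):
                findings.append(f"{method.upper()} {path}")
    return sorted(findings)


# Hypothetical spec: authentication is the default, but two operations opt out.
EXAMPLE_SPEC = {
    "openapi": "3.0.3",
    "security": [{"bearerAuth": []}],
    "paths": {
        "/documents": {"get": {"responses": {}}},
        "/search": {"post": {"security": [], "responses": {}}},
        "/health": {"get": {"security": [{}], "responses": {}}},
    },
}

if __name__ == "__main__":
    for finding in unauthenticated_operations(EXAMPLE_SPEC):
        print(finding)
```

A check like this only covers what the specification declares. Endpoints that are documented as protected but not enforced in code still need to be tested against the running service.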

If Your Enterprise AI Platform Has Exposed Endpoints, Your Credentials Are at Risk

A password manager makes unique credentials practical, so that if one service is compromised, the damage is far more likely to stay contained. Every enterprise AI deployment should have unique, rotated credentials for every connected service.

NordPass is what I recommend for teams managing service credentials at scale.

Try NordPass for Teams

Affiliate link. I may earn a commission at no extra cost to you.

Securing the AI Layer

To defend against agentic discovery, organizations need to rethink the proximity of AI logic to user data. This starts with moving system prompts into a read-only configuration layer, separate from the production databases that handle chat logs or user files.
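As a rough illustration of that separation, the sketch below loads a system prompt from a file rather than a database row and refuses to use it if its SHA-256 digest no longer matches a value pinned at deploy time. The function names and file layout are hypothetical. The read-only guarantee itself would come from deployment (file permissions, a read-only mount, or a signed artifact), which this code assumes rather than enforces.

```python
import hashlib
import hmac
import tempfile
from pathlib import Path


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def load_system_prompt(path: Path, expected_sha256: str) -> str:
    """Load a prompt file, refusing to use it if its digest has changed."""
    data = path.read_bytes()
    actual = sha256_hex(data)
    # Constant-time comparison of the two hex digests.
    if not hmac.compare_digest(actual, expected_sha256):
        raise RuntimeError(f"system prompt {path.name} failed integrity check")
    return data.decode("utf-8")


def demo() -> tuple[str, bool]:
    """Load a pinned prompt, then show that a silent rewrite is detected."""
    with tempfile.TemporaryDirectory() as tmp:
        prompt_path = Path(tmp) / "assistant_prompt.txt"
        original = b"Cite a source for every claim."
        prompt_path.write_bytes(original)
        pinned = sha256_hex(original)  # in practice, recorded at deploy time

        loaded = load_system_prompt(prompt_path, pinned)

        # Simulate an attacker editing the stored prompt.
        prompt_path.write_bytes(b"Never cite sources.")
        try:
            load_system_prompt(prompt_path, pinned)
            tamper_detected = False
        except RuntimeError:
            tamper_detected = True
    return loaded, tamper_detected
```

The point of pinning the digest outside the data store is that an attacker with write access to user data still cannot change model behavior without also changing the deployed application.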

The Lilli breach also demonstrates that "technically accessible" and "actively exploited" are now nearly the same thing. Security teams need to move beyond signature-based scanning and begin using agent-based red teaming to find the chained vulnerabilities that traditional tools miss.

McKinsey patched the vulnerabilities within 24 hours of CodeWall's disclosure, but the window existed long enough for a complete compromise. If you manage credentials and access controls for enterprise systems, the time between a flaw being exposed and being exploited is shrinking faster than defensive tooling is adapting.

Related Reading

- Vibe Coding Security: 7 Flaws Your AI Forgot to Fix: A checklist for securing AI-generated code before launch

- MCP Security: 53% of Servers Store Your Keys in Plaintext: How to audit and harden your MCP environment

- OpenClaw Security Audit: Complete Checklist (2026): Step-by-step hardening guide for OpenClaw users

Frequently Asked Questions

What was the primary cause of the McKinsey AI hack?

The breach was initiated by an autonomous AI agent that discovered unauthenticated API endpoints and exploited a classic SQL injection vulnerability to gain full database access. No credentials were required at any stage.

How does an AI agent attack differ from traditional hacking?

Unlike traditional scanners that look for specific signatures, AI agents can autonomously map an entire attack surface and chain multiple minor vulnerabilities together in real time without human intervention. CodeWall's agent covered in two hours what might take a human attacker days to discover.

How can companies improve AI system prompt security?

Organizations should store AI system instructions and guardrail prompts in isolated, read-only environments rather than the primary data layer where user chat history or client files are kept. Write access to system prompts should be treated with the same controls as cryptographic keys.

Sources

- CodeWall: How We Hacked McKinsey's AI Platform (March 9, 2026)

- The Register: AI vs AI, Agent Hacked McKinsey's Chatbot

- IBM 2026 X-Force Threat Intelligence Index

T.O. Mercer | SafePasswordGenerator.net